Members: Petia Radeva, Mariella Dimiccoli, Marc Bolaños, Gabriel de Oliveira, Maedeh Aghaei, Estefania Talavera, Alejandro Cartas

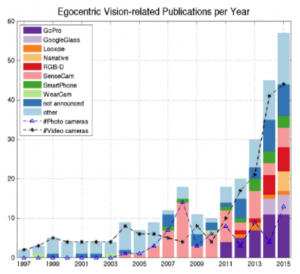

Visual lifelogging consists of acquiring images that capture the daily experiences of the user by wearing a camera over a long period of time. The pictures taken offer considerable potential for knowledge mining concerning how people live their lives, and hence open up new opportunities for many potential applications in fields including healthcare, security, leisure and the quantified self. Egocentric vision, or first-person camera vision, refers to all Computer Vision and Machine Learning methods used to extract semantic information from visual lifelogging data. This field has received exponentially growing interest in the last few years, as can be appreciated from the following statistics of published papers on images acquired with different wearable cameras.

References:

- Marc Bolaños, Mariella Dimiccoli, Petia Radeva: Towards Storytelling from Visual Lifelogging: An Overview. CoRR abs/1507.06120 (2015)

Within our visual lifelogging project, we tackle the following egocentric (first-person camera) vision problems:

TEMPORAL SEGMENTATION, SUMMARIZATION AND KEY-FRAME EXTRACTION

While wearable cameras are becoming increasingly popular, locating relevant information in large unstructured collections of egocentric images is still a tedious and time-consuming process. This work addresses the problem of organizing egocentric photo streams acquired by a wearable camera into semantically meaningful segments, an important step towards automatically annotating these photos for browsing and retrieval. In the proposed method, contextual and semantic information is first extracted for each image using a Convolutional Neural Network approach. Then, a vocabulary of concepts is defined in a semantic space by relying on linguistic information. Finally, by exploiting the temporal coherence of concepts in photo streams, images that share contextual and semantic attributes are grouped together. The resulting temporal segmentation is particularly suited for further analysis, ranging from event recognition to semantic indexing and summarization. Experimental results on an egocentric dataset of nearly 31,000 images show the advantage of the proposed approach over state-of-the-art methods.
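As a rough illustration of the temporal-coherence idea only, the sketch below splits a time-ordered photo stream into contiguous segments whenever consecutive images stop looking alike. It is not the published method: the feature vectors, the cosine-similarity criterion, the `threshold` value and the function names are all assumptions made for this example, standing in for the CNN and semantic features described above.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def segment_stream(features, threshold=0.8):
    """Group consecutive frame indices into segments.

    A new segment starts whenever the similarity between a frame and the
    previous one falls below `threshold` (a hypothetical tuning parameter).
    """
    if not features:
        return []
    segments = [[0]]
    for i in range(1, len(features)):
        if cosine_similarity(features[i - 1], features[i]) >= threshold:
            segments[-1].append(i)
        else:
            segments.append([i])
    return segments


# Toy per-image descriptors (made up); real ones would come from a CNN.
toy_features = [
    [1.0, 0.0, 0.1],
    [0.9, 0.1, 0.0],
    [0.0, 1.0, 0.2],
    [0.1, 0.9, 0.1],
    [0.0, 0.1, 1.0],
]
segments = segment_stream(toy_features)
print(segments)
```

Comparing only adjacent frames keeps the sketch short; a clustering step with a temporal constraint would be a more robust choice on real data.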

Building a visual summary from an egocentric photostream captured by a lifelogging wearable camera is of high interest for different applications (e.g. memory reinforcement). In this work, we propose a new summarization method based on keyframe selection that uses visual features extracted by means of a convolutional neural network. Our method applies unsupervised clustering to divide the photostreams into events, and finally extracts the most relevant keyframe for each event. We assess the results with a blind-taste test in which a group of 20 people rated the quality of the summaries.
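The keyframe step can be pictured with a small sketch. It assumes the events are already available as lists of frame indices, and it uses "closest to the event's mean feature vector" as a stand-in relevance criterion; that criterion, the toy features and the function names are assumptions for illustration, not necessarily the criterion used in the paper.

```python
def squared_distance(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def select_keyframes(features, events):
    """Return one frame index per event: the frame nearest the event centroid."""
    keyframes = []
    for event in events:
        dim = len(features[event[0]])
        # Mean feature vector of all frames in this event.
        centroid = [
            sum(features[i][d] for i in event) / len(event) for d in range(dim)
        ]
        # Pick the frame whose features are closest to that mean.
        best = min(event, key=lambda i: squared_distance(features[i], centroid))
        keyframes.append(best)
    return keyframes


# Toy 2-D features for six frames, already grouped into two events (made up).
toy_features = [
    [0.0, 0.0], [1.0, 0.0], [5.0, 0.0],
    [10.0, 10.0], [10.0, 12.0], [10.0, 11.0],
]
toy_events = [[0, 1, 2], [3, 4, 5]]
keyframes = select_keyframes(toy_features, toy_events)
print(keyframes)
```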

References:

- M. Dimiccoli, M. Bolaños, E. Talavera, M. Aghaei, S. Nikolov, P. Radeva. Semantic Regularized Clustering for Egocentric Photo Streams Segmentation. To appear in Computer Vision and Image Understanding, 2016.

- M. Dimiccoli, H. Xu, P. Radeva. A cognitive-based model for event learning. Women in Machine Learning Workshop (WIML), in conjunction with the International Conference on Neural Information Processing Systems (NIPS), December 2016, Barcelona, Spain.

- Estefanía Talavera, Mariella Dimiccoli, Marc Bolaños, Maedeh Aghaei, Petia Radeva: R-Clustering for Egocentric Video Segmentation. IbPRIA 2015: 327-336

- Marc Bolaños, Ricard Mestre, Estefanía Talavera, Xavier Giró i Nieto, Petia Radeva: Visual summary of egocentric photostreams by representative keyframes. ICME Workshops 2015: 1-6

- Aniol Lidon, Marc Bolaños, Mariella Dimiccoli, Petia Radeva, Maite Garolera, Xavier Giró i Nieto: Semantic Summarization of Egocentric Photo Stream Events. CoRR abs/1511.00438 (2015)

- Marc Bolaños, Maite Garolera, Petia Radeva: Video Segmentation of Life-Logging Videos. AMDO 2014: 1-9

EGOCENTRIC SOCIAL INTERACTION ANALYSIS

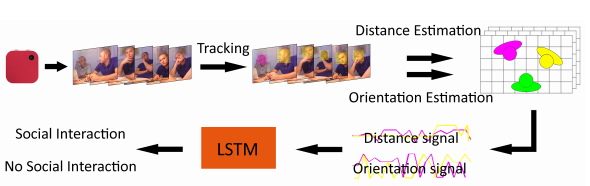

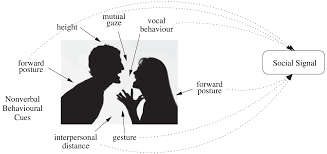

Given a user wearing a low-frame-rate wearable camera during a day, this work aims to automatically detect the moments when the user engages in a social interaction, solely by reviewing the photos automatically captured by the worn camera. The proposed method, inspired by the sociological concept of F-formation, exploits the distance and orientation of the individuals appearing in the scene, with respect to the user, from a bird's-eye view perspective. As a result, the interaction pattern over the sequence can be understood as a two-dimensional time series that corresponds to the temporal evolution of the distance and orientation features. A Long Short-Term Memory (LSTM) based Recurrent Neural Network is then trained to classify each time series. Experimental evaluation on a dataset of 30,000 images has shown promising results for the proposed method for social interaction detection in egocentric photo streams.
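To make the "two-dimensional time series" concrete, here is a minimal sketch of how distance and orientation features could be computed per frame from a bird's-eye view. The coordinate convention (camera wearer at the origin, person at `(x, z)`, `facing_deg` as the direction the person is facing), the definition of orientation as the angle between that direction and the direction towards the wearer, and all names are assumptions for illustration; the text above does not specify them, and the LSTM classifier is not shown.

```python
import math


def interaction_features(track):
    """Turn a tracked person into a (distance, orientation) time series.

    `track` is a list of (x, z, facing_deg) tuples, one per frame, in a
    hypothetical bird's-eye coordinate system with the wearer at the origin.
    Orientation is 0 when the person faces the wearer, 180 when facing away.
    """
    series = []
    for x, z, facing_deg in track:
        distance = math.hypot(x, z)
        # Direction pointing from the person back to the wearer.
        to_wearer_deg = math.degrees(math.atan2(-z, -x))
        # Wrap the angular difference into [-180, 180) before taking abs().
        diff = (facing_deg - to_wearer_deg + 180.0) % 360.0 - 180.0
        series.append((distance, abs(diff)))
    return series


# Two made-up frames: a person 2 m ahead facing the wearer, then a person
# further away and turned aside.
toy_track = [(0.0, 2.0, -90.0), (3.0, 4.0, 0.0)]
series = interaction_features(toy_track)
for distance, orientation in series:
    print(round(distance, 2), round(orientation, 2))
```

A sequence of such pairs, one per photo, is the kind of input a recurrent classifier would then consume.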

References:

- M. Aghaei, M. Dimiccoli, P. Radeva. Extended Bag-of-Tracklets for Multi-Face Tracking in Egocentric Photo Streams. Computer Vision and Image Understanding, Volume 149, 146-156, 2016. Special Issue on Assistive Computer Vision and Robotics, Elsevier, 2016.

- M. Aghaei, M. Dimiccoli, P. Radeva. With Whom Do I Interact? Detecting Social Interactions in Egocentric Photo Streams. To be presented at the International Conference on Pattern Recognition (ICPR), December 2016, Cancun, Mexico.

- M. Aghaei, M. Dimiccoli, P. Radeva. Towards Social Interaction Detection in Egocentric Photo Streams. Workshop on Egocentric (first-person) Vision, in conjunction with the Computer Vision and Pattern Recognition Conference (CVPR), June 2016, Las Vegas, USA.

- M. Aghaei, M. Dimiccoli, P. Radeva. Towards Social Interaction Detection in Egocentric Photo Streams. Proceedings of the International Conference on Machine Vision (ICMV), November 2015, Barcelona, Spain.

EGO-OBJECT DISCOVERY

Lifelogging devices are spreading faster every day. This growth offers great opportunities for developing methods to extract meaningful information about the user wearing the device and his/her environment. In this work, we propose a semi-supervised strategy for easily discovering objects relevant to the person wearing a first-person camera. Given an egocentric video or image sequence acquired by the camera, our algorithm uses both the appearance extracted by means of a convolutional neural network and an object refill methodology that makes it possible to discover objects even when they appear only a few times in the collection of images. An SVM filtering strategy is applied to deal with the large share of false positive object candidates found by most state-of-the-art object detectors. We validate our method on a new egocentric dataset of 4912 daily images acquired by 4 persons, as well as on both the PASCAL 2012 and MSRC datasets. On all of them we obtain results that largely outperform the state-of-the-art approach. We make public both the EDUB dataset and the algorithm code.

References:

- Marc Bolaños, Petia Radeva: Ego-object discovery. CoRR abs/1504.01639 (2015)

- Marc Bolaños, Maite Garolera, Petia Radeva: Object Discovery Using CNN Features in Egocentric Videos. IbPRIA 2015: 67-74

International Workshop on Social Signal Processing and Beyond

**********************************************************************

http://www.ub.edu/cvub/SSPandBE/index.html

September 11, 2017, Catania, Italy

in association with ICIAP 2017 (http://www.iciap2017.com/)

Given the increasing quantities of personal data being gathered by individuals, the concept of a digital library of rich multimedia and sensory content for every individual is becoming a reality and fast becoming a mainstream topic for multimedia research. This is often referred to as lifelogging and there are significant challenges to be addressed in the area, concerning the gathering, enriching, searching and accessing of lifelog data.

In recent years, deep learning has achieved remarkable results in fields such as computer vision, speech recognition and natural language processing. This DL revolution is slowly reaching the challenging problems of the medical domain, opening the doors for personalized medicine. The medical domain is characterized by high variability of data including text, imaging, and genomic data. In this talk, we will present recent advances in two domains of medical data: imaging and genomics.

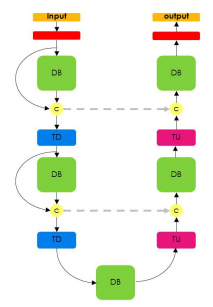

First, we will introduce a simple, yet powerful pipeline for medical image segmentation that combines Fully Convolutional Networks (FCNs) with Fully Convolutional Residual Networks (FC-ResNets). We propose and examine a design that takes particular advantage of recent advances in the understanding of both Convolutional Neural Networks as well as ResNets. Our approach focuses upon the importance of a trainable pre-processing when using FC-ResNets and we show that a low-capacity FCN model can serve as a pre-processor to normalize medical input data. We show that using this pipeline, we exhibit state-of-the-art performance on the challenging Electron Microscopy benchmark, when compared to other 2D methods. We improve segmentation results on CT images of liver lesions, when contrasting with standard FCN methods. Moreover, when applying our 2D pipeline on a challenging 3D MRI prostate segmentation challenge we reach results that are competitive even when compared to 3D methods. The obtained results illustrate the strong potential and versatility of the pipeline by achieving highly accurate results on multi-modality images from different anatomical regions and organs.

Second, we will introduce a novel deep learning architecture to address the challenges posed by genomic data, where the number of input features can be orders of magnitude larger than the number of training examples, making it difficult to avoid overfitting, even when using the known regularization techniques. Improving the ability of deep learning to handle such datasets could have an important impact in medical research, more specifically in precision medicine, where high-dimensional data regarding a particular patient is used to make predictions of interest. We propose a novel neural network parameterization, that we call Diet Networks, which considerably reduces the number of free parameters in the model. The Diet Networks parametrization is based on the idea that we can first learn or provide an embedding for each input feature and then learn how to map a feature's representation to the parameters linking the value of the feature to each of the hidden units of the classifier network. We experiment on a population stratification task of interest to medical studies and show that the proposed approach can significantly reduce both the number of parameters and the error rate of the classifier. This work was accepted at ICLR 2017.
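The parameter-saving idea can be sketched in a few lines. In this toy version each input feature has a fixed embedding, and a small auxiliary map turns that embedding into the feature's row of first-layer weights, so only the auxiliary map is trained. The single linear map, the toy sizes and values, and the variable names are assumptions for illustration; this is not the architecture from the talk or the paper.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


n_features, emb_dim, n_hidden = 6, 2, 3

# One embedding per input feature; here provided (fixed), not learned.
feature_embeddings = [[float(i), 1.0] for i in range(n_features)]

# Auxiliary map from an embedding to that feature's outgoing weights.
# These emb_dim * n_hidden numbers are the only free parameters here.
aux_weights = [[0.1, 0.0, -0.1], [0.0, 0.2, 0.1]]

# Predicted first-layer weights of the classifier: n_features x n_hidden.
predicted_first_layer = matmul(feature_embeddings, aux_weights)

# Hidden pre-activations for one toy input example.
x = [[1.0, 0.0, 2.0, 0.0, 1.0, 0.0]]
hidden = matmul(x, predicted_first_layer)

direct_params = n_features * n_hidden  # a plain dense first layer
diet_params = emb_dim * n_hidden       # the auxiliary map above
print(direct_params, diet_params)
```

With genomic inputs, `n_features` can be orders of magnitude larger than `emb_dim`, which is where the reduction in free parameters comes from.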

Open Postdoctoral fellowship/s in the Dept. de Matemàtiques i Informàtica at Universitat de Barcelona.

The Dept. de Matemàtiques i Informàtica (Mathematics and Computer Science) is looking for and willing to support excellent postdoctoral researchers in the fields of Machine Learning, Computer Vision and Human-Computer Interaction who are interested in applying for a Beatriu de Pinós (BP) 2016 fellowship so as to conduct a two-year postdoc at Universitat de Barcelona.

The purpose of the Beatriu de Pinós programme is to award 60 individual grants for the hiring and incorporation of postdoctoral research staff into the Catalan science and technology system. These grants are designed for the incorporation of young researchers (who obtained their PhD between 2007 and 2014 and have not resided or worked in Spain for more than 12 months in the three years prior to the date of submission of the application), so that they can improve their professional prospects and obtain an independent research position. Candidates must carry out a research and training project for the entire period of the grant, one that will allow them to progress in the development of their professional careers. Please check the website of the BP programme for further information about this fellowship.

Some of the specific projects we are working on include:

+ Machine Learning: Deep Learning for time series analysis, Supervised Online Learning Algorithms, Bayesian statistics and deep learning.

+ Computer Vision: Visual Lifelogging and Egocentric Vision, Neuroimage processing, Computer Vision for Food Analysis, Deep learning and Image Aesthetics, Ultrasound image analysis.

+ Human-Computer Interaction: ageing / older people, interfaces for people with mild dementia or with aphasia, universal design of STEM documents

Deadline: 01/12/2016.

For further information about this postdoctoral opportunity please feel free to contact us:

Petia Radeva (petia.ivanova@ub.edu or radevap@gmail.com),

www.ub.edu/cvub,

www.cvc.uab.es/people/petia

Petia Radeva was an invited speaker at the workshop “Humanitarian and social science: from the university to the enterprise”, 17 November 2016, Faculty of Philology, University of Barcelona.

Best paper award at CIARP'2016 to our paper: "Deep Learning Features for Wireless Capsule Endoscopy Analysis", by Santi Segui, Michal Drozdzal, Guillem Pascual, Carolina Malagelada, Fernando Azpiroz, Petia Radeva and Jordi Vitrià, Lima, Perú, 2016.

Petia Radeva received the International CIARP Award "Aurora Pons Porrata" in recognition of an outstanding technical contribution to the field of pattern recognition, data mining and related areas, 2016.

We won the Mention prize (second place) in the DKV competition with our App for Automatic Food Recognition for Healthy Habits Promotion! Congratulations, team!

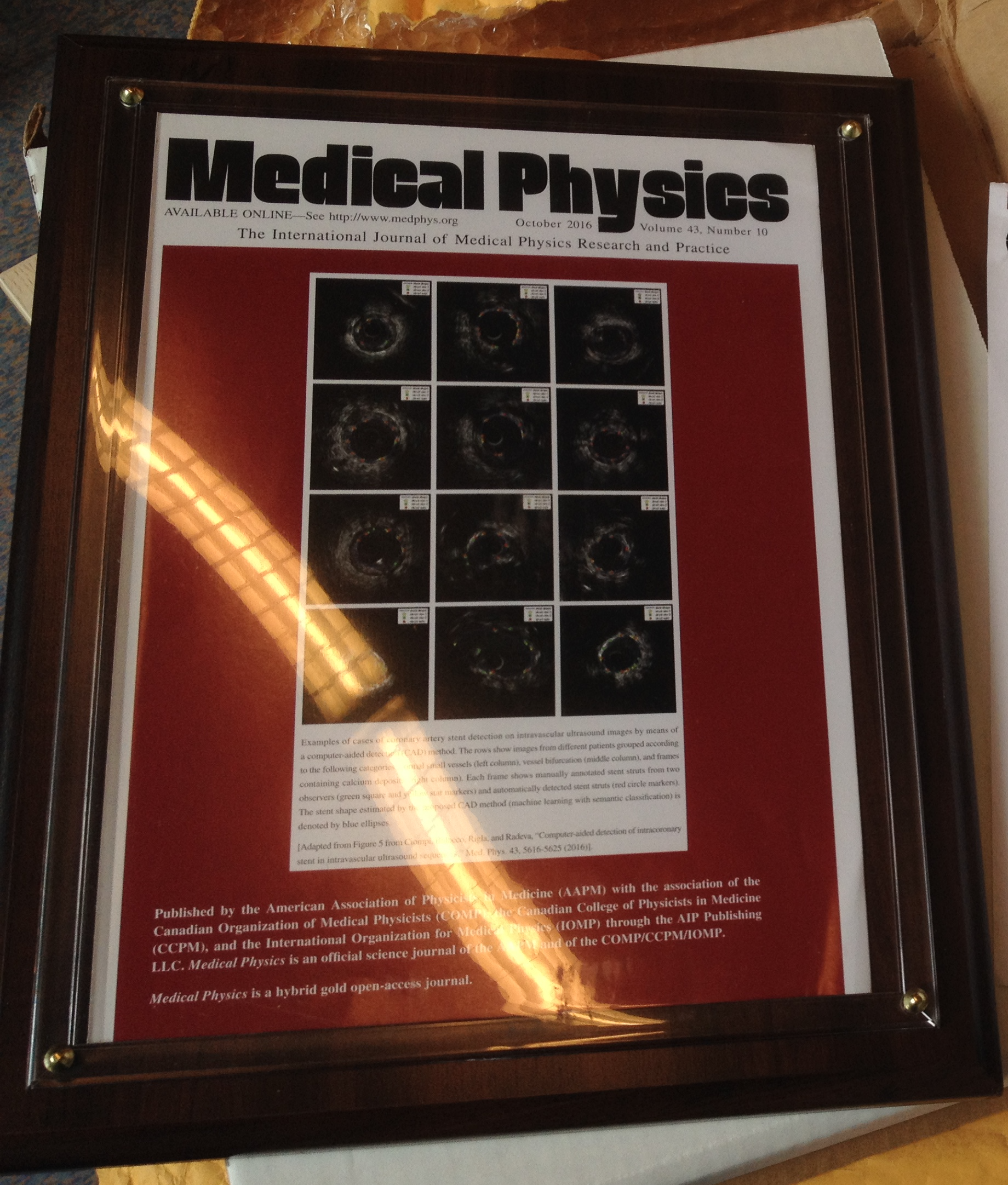

The journal Medical Physics chose figures from our work on stent analysis for its cover. http://www.medphys.org

Petia Radeva gave a plenary talk "Can Deep Learning and Egocentric Vision for Visual Lifelogging help us eat better?" at the CCIA'2016, organized by Xavier Binefa, UPF, Barcelona, Spain.

The prestigious GRADIANT award was assigned to Beatriz Remeseiro for the best PhD thesis applied to the ICT sector 2016.

3 abstracts accepted at the NIPS Workshop WiML'16. Congratulations, Mariella, Maya and Beatriz!

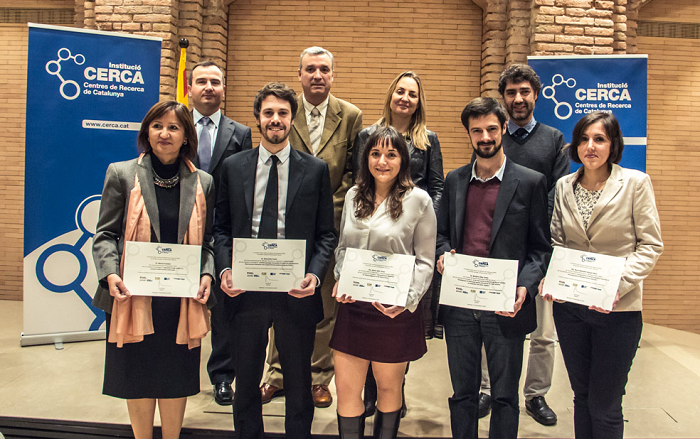

On 8 February 2016, Michal Drozdzal was one of the 5 researchers who received the Pioneer Award, for the doctoral thesis "Sequential image analysis for computer-aided wireless endoscopy". This is the second edition of the competition promoted by CERCA. This year a total of nineteen researchers (10 male and 9 female) participated, from thirteen CERCA centres. By area, there were 3 projects in science, 7 in medical and health sciences, 3 in engineering and 6 in life sciences. The jury appreciated the advanced technology, which solves problems linked to endoscopy, with a significant population component and a business link that enables a good transfer of the knowledge generated.

Re-memory: Cognitive Training based on autobiographic records to exercise memory

Exposition “+HUMANS”, 17 December 2015, CCB, Barcelona, Spain

The majority of patients with mild cognitive impairment develop dementia and Alzheimer's disease. Re-memory, a project financed by the Foundation “La Marató de TV3”, studies a new cognitive training based on the concept of lifelogging to exercise memory. Re-memory works in the following way: the patient carries a camera that automatically captures images of all locations visited, the events and activities in which the wearer participates, and the persons he/she interacts with. Inspired by the use of wearable cameras in the first cases of amnesia, the members of the Re-memory project are creating a cognitive training program based on autobiographic re-experiencing to positively impact cognition and improve the memory and function of people with mild cognitive impairment.

Our work on using Deep Learning techniques for endoluminal image analysis was presented as an example at NVIDIA’s GPU Technology Conference (http://www.gputechconf.com/).
